For details about what Lambda architecture is, read Introduction to Lambda Architecture.

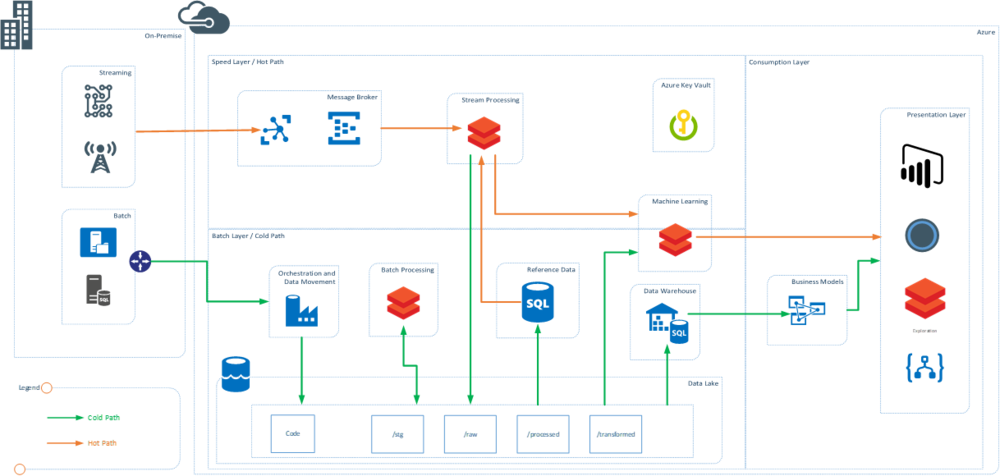

From a technology point of view, Databricks is becoming the new normal in data processing technologies in both Azure and AWS. This post provides a view of lambda architecture with Databricks at front and center. Databricks has capabilities to replace multiple tools, and those are described in detail below.

Note: In this post I wanted to focus on the use of Databricks in a typical lambda landscape. In order to provide the required details, I have abstracted some other aspects of data architecture including Data Governance, Security, MDM, Metadata Management, and Data Quality. These are still key functions of data architecture and have to be planned.

The key benefit of using Databricks is that it is a Spark-based engine with zero management. Using it in multiple places means teams need to be skilled on only one technology.

Batch Processing Using Databricks

Databricks can be used as a great data engineering and batch processing tool. It uses Spark interactive and Spark for batch processing using interactive clusters and notebooks. The clusters can be scaled up on demand and jobs can be orchestrated using external tools via APIs. In Azure, ADF provides native support for Databricks.

One of the key benefits Databricks brings is that developers can use three languages — Scala, Python, and SQL are all first-class citizens. Use of Databricks Delta brings ACID properties to the data lake and makes managing lakes easier.

Databricks can potentially replace: ETL and Big Data tools such as Informatica, SSIS, Hortonworks, Cloudera, EMR (AWS), HDInsight (Azure), Azure Data Lake Analytics (ADLA), Talend, Alteryx.

Stream Processing Using Databricks

The need for stream processing and low-latency processing of data from sources that emit real-time information has been very popular in recent years. Using Databricks and Spark Streaming, this real-time processing of data becomes easier and the need for a separate technology can be avoided. Spark Streaming can subscribe to real-time events, process them at low latency, while using information from reference data sources.

Databricks can potentially replace: Real-time stream processing technologies such as Apache Storm, Azure Stream Analytics, HDInsight, Striim, and Amazon Kinesis.

Machine Learning Using Databricks

Databricks runs Spark, and one of the key components of Spark is MLlib. Organizations can use a wide variety of frameworks supported in Spark and Databricks to create models using distributed compute. These models can also be used in real-time to generate results from a stream. Use of SparkR and PySpark provide distributed parallel processing capabilities using data scientist-familiar languages such as R and Python.

Databricks can potentially replace: ML compute and workbenches such as HDInsight R Server, VMs, EC2, Azure Machine Learning Studio, Dataiku, and other ML workbenches.

Interactive Exploration Using Databricks

Exploration can mean using and consuming prebuilt reports and dashboards — Databricks has capability to do that using interactive dashboards within notebooks. If the enterprise publishes data into a lake/hub, there is a need to explore and dive into the data lake to find what data can be used for business use cases. Databricks interactive clusters and notebooks can be used to query files in the data lake to explore the data.

Databricks can potentially replace: Data exploration tools such as Datameer, Alteryx; data visualizations for real-time and dashboard sharing; data preparation and consumption workbenches such as Talend, Trifacta, Pentaho.

Most organizations are trying to bring Databricks into their technology equation and use it for at least one of the scenarios mentioned above.